How to choose an AI server in 2026: Architecture, hardware, and power estimation

Key takeaways:

- AI server choice strictly depends on workload type: for Training, interconnect bandwidth (NVLink) and VRAM capacity are critical; for Inference, low latency and tensor performance (TOPS/TFLOPS) matter most.

- Graphics processors (GPU) are the foundation of AI. Selection is built around accelerator architecture (for example, NVIDIA Hopper/Blackwell or AMD Instinct) and its interaction with CPU.

- Storage «Bottleneck» is solved only with NVMe SSD arrays using PCIe 5.0/6.0, capable of continuously feeding data to GPU.

- Power consumption of one modern AI server (based on 8x GPU) can reach 10-12 kW, requiring liquid cooling systems (DLC).

1. Task split: Training vs Inference

Before selecting a configuration, you must define AI workload type. A server for building a neural network is fundamentally different from a server for running it.

- Training: The process of creating a model (LLM, image generation). It requires massive compute power, huge VRAM capacity for storing weights, and ultra-fast communication between multiple GPU.

- Inference: Using a trained model to answer user requests. Here response speed (Latency), energy efficiency, and parallel processing of thousands of small requests are more important. For inference, servers with 1-4 less powerful GPU (for example, NVIDIA L40S or RTX Ada Generation) are often sufficient.

2. Key AI server components

AI server architecture is built around graphics accelerators, but without proper balance of other components, expensive GPU will stay idle waiting for data.

Graphics accelerators (GPU)

GPU are the heart of artificial intelligence. Unlike CPU with dozens of cores, GPU have tens of thousands of small cores, ideal for parallel matrix computation.

- VRAM capacity: To run open LLM (for example, Llama 3 70B), about 40-80 GB VRAM is required (depending on quantization). For training models with billions of parameters, servers with total memory from 640 GB are needed (for example, 8x NVIDIA H100 80GB or B200 configurations).

- Interconnect: If a server has more than 2 GPU, standard PCIe communication becomes a bottleneck. You should select platforms with high-speed direct data exchange technologies between graphics cards (NVIDIA NVLink / NVSwitch or AMD Infinity Fabric).

Central processing unit (CPU)

In an AI server, CPU handles data preprocessing, task orchestration, and network traffic routing.

- Requirements: It is recommended to install two high-frequency processors (Intel Xeon Scalable 5th/6th generation or AMD EPYC 4th/5th generation) with a large number of PCIe lanes for direct NVMe storage and network adapter connectivity.

System memory (RAM)

- Sizing rule: System RAM volume should be at least 2x total VRAM volume of all installed graphics cards. If the server has 8 GPU with 80 GB each (total 640 GB VRAM), you will need 1.5 to 2 TB DDR5 system memory with ECC.

Storage subsystem (Storage)

Machine learning requires continuous reading of massive datasets. Using HDD or slow SATA SSD is unacceptable — GPU will be idle up to 90% of the time.

- Standard: Only enterprise U.2/U.3 or E1.S/E3.S NVMe SSD with PCIe 5.0 support. Array read speed should be from 30 to 60 GB/s.

3. Comparison table: Choosing GPU for AI workloads (Current for 2026)

| GPU model | Memory capacity (VRAM) | Primary use case | Power consumption | Key features |

|---|---|---|---|---|

| NVIDIA B200 / GB200 | 192 GB (HBM3e) | Training heavy LLM (GPT-class) | 1000 W+ | Extreme bandwidth, liquid cooling. |

| NVIDIA H100 / H200 | 80 GB / 141 GB | Universal: Training and complex Inference | 700 W | Industry standard for data centers. |

| AMD Instinct MI300X | 192 GB | Training and Inference of large models | 750 W | Cost-effective alternative with massive memory buffer. |

| NVIDIA L40S | 48 GB (GDDR6a) | Inference, video analytics, 3D rendering | 350 W | Does not require NVLink, fits perfectly into standard PCIe servers. |

4. Network infrastructure (Networking)

If a single server is not enough, AI clusters are combined in data centers. For synchronizing neural network weights between servers, standard 10G/25G Ethernet is not sufficient.

You will need adapters (SmartNIC / DPU) supporting 400GbE or 800GbE, as well as InfiniBand or RoCE v2 (RDMA over Converged Ethernet) for direct access to other servers' memory bypassing CPU.

5. Cooling and power: Data center physical limits

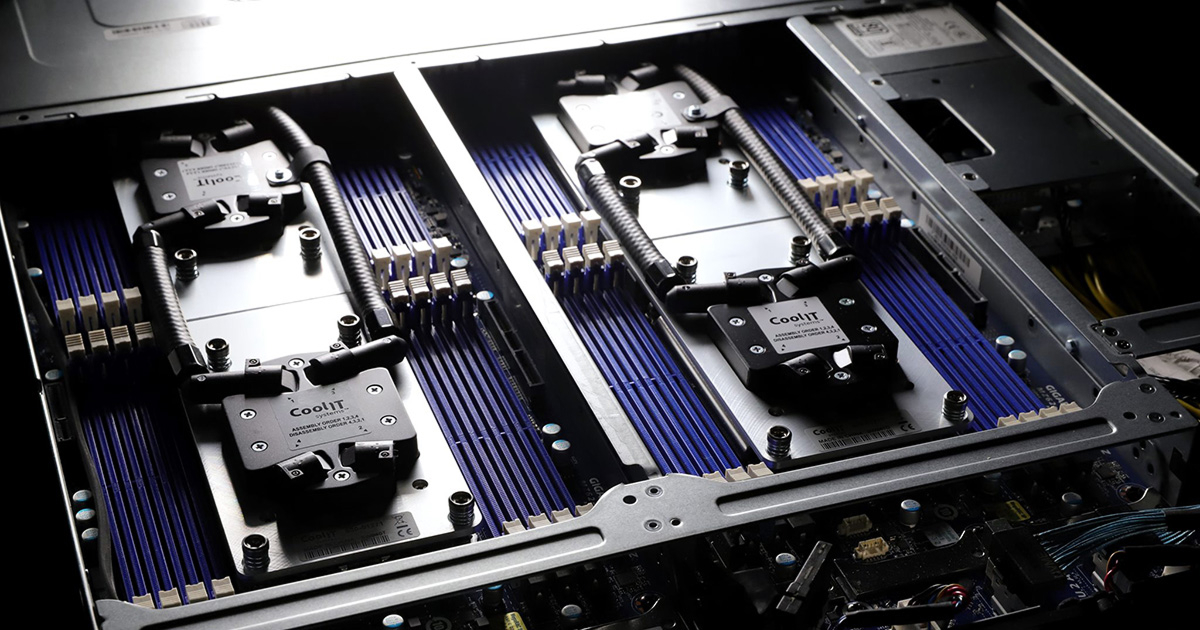

A modern 4U-8U server equipped with eight top-tier graphics accelerators consumes from 8 to 12 kilowatts (kW) of electricity.

- Power: Ensure that your data center rack can handle such load for one unit block. Reinforced power supplies will be required (from 3000 W each with N+N or N+1 redundancy).

- Cooling: Classic air cooling (cold-aisle air) is no longer enough for flagship solutions (Blackwell class). Consider server platforms with factory-integrated DLC (Direct Liquid Cooling) — direct liquid cooling on CPU and GPU chips.

Conclusion

Choosing a server for artificial intelligence is about finding bottlenecks. There is no point in buying eight of the most expensive NVIDIA or AMD graphics accelerators if you save on NVMe storage or network cards that cannot provide required data feed speed. For starting and inference testing, focus on servers with 1-4 mid-range cards (L40S / RTX 6000 Ada). For training foundational models, consider only HGX architectures with NVLink interconnect and cooling headroom.